Trending Tools

Bytedance Seedream v5 Lite

ByteDance Seedream 5.0 Lite is a fast and efficient text-to-image generation model designed to produce high-quality, detailed images from natural language prompts. It is optimized for speed, scalability, and cost-efficiency, making it ideal for workflows that require quick image generation without sacrificing visual clarity. This model excels at understanding complex prompts, enabling users to generate photorealistic images, creative compositions, stylized visuals, and detailed scenes across a wide range of use cases. It supports flexible image sizing (up to ~2K resolution), multi-image generation, and safety filtering, making it suitable for both creative and production environments. Seedream 5.0 Lite is best suited for high-volume image generation, rapid prototyping, social media content creation, and general-purpose AI image workflows. It provides a strong balance between performance, quality, and affordability, making it a reliable choice for developers, creators, and AI platforms. This model should be selected when users want to generate images quickly from text prompts at scale, rather than focusing on ultra-premium or highly stylized outputs.

Image Apps v2 Hair-change

Change hairstyles and hair colors in photos realistically.

Kling-video v2.1 Master

Kling 2.1 Master is designed for top-tier image-to-video generation, with highly fluid motion, cinematic visuals, and precise prompt adherence.

Bytedance Seedream v4 Edit

Advanced AI-powered image-to-image editing model by ByteDance specializing in complex, instruction-based transformations of existing images. Accepts up to 10 reference images simultaneously for sophisticated multi-image editing workflows. Excels at contextual scene modifications including object insertion (add cats, people, furniture), clothing and wardrobe changes on models, background replacement with specific era or location styles (Victorian buildings, modern cityscapes), environmental transformations, and element addition while maintaining natural composition. Supports natural language editing commands for intuitive manipulation - dress models in specific outfits, change weather conditions, add accessories, modify lighting, or completely reconstruct scenes. Generates multiple variations per prompt (up to 6 images per generation) across all standard aspect ratios and custom dimensions up to 14142px. Features dual prompt enhancement modes: standard for highest quality results and fast for quicker turnarounds. Ideal for fashion e-commerce product staging, real estate virtual staging, advertising campaign variations, social media content adaptation, product photography enhancement, character outfit variations, scene composition testing, and creative concept exploration requiring precise control over existing visual assets.

Flux-2 Ballpoint-Pen-Sketch

Authentic ballpoint pen sketch generator powered by Flux 2 with specialized LoRA for hand-drawn pen illustration aesthetics. Creates realistic pen sketches with characteristic ink strokes, cross-hatching, and the distinctive look of blue or black ballpoint pen on paper. Perfect for portraits, urban sketches, architectural drawings, and artistic hand-drawn style imagery. Uses 'b4llp01nt' trigger word for optimal results. Adjustable sketch intensity via lora_scale (0-2). Best for: pen sketches, hand-drawn illustrations, portrait sketches, urban sketching, architectural drawings, notebook doodles, ink illustrations, artistic renderings, storyboard art, and traditional drawing aesthetics. Style: Realistic ballpoint pen on paper with authentic stroke patterns.

Image Apps v2 Product-holding

Place products naturally in a person’s hands for realistic marketing visuals.

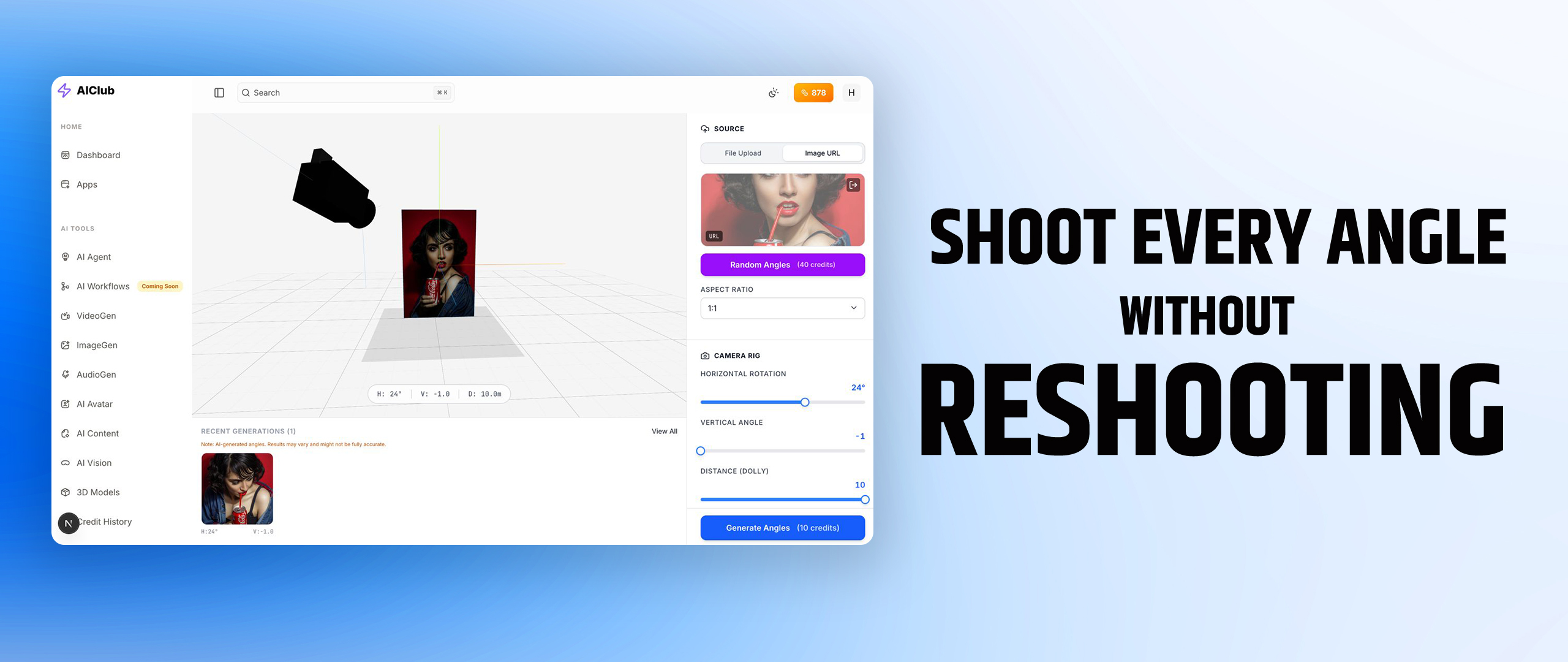

Flux-2 Multiple-Angles

AI-powered camera angle transformation that generates the same object from different viewpoints. Rotate objects horizontally (0-360° azimuth), adjust vertical elevation (0-60°), and control zoom distance (wide to close-up). Perfect for product photography, 3D asset previews, e-commerce multi-angle views, and object visualization from any perspective. Powered by Flux 2 with specialized LoRA for consistent object identity across angle changes. Best for: product 360° views, multi-angle product shots, object rotation, camera perspective changes, e-commerce photography, 3D preview generation, turntable-style images, front/side/back views, and consistent object visualization from different angles. Category: Image-to-Image transformation.
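As a rough illustration of the parameter ranges above, the sketch below plans a turntable-style set of views: evenly spaced azimuths over 0-360° at one elevation clamped to 0-60°. The function name `turntable_views` and the tuple layout are hypothetical and not part of the tool's API; only the two ranges come from the description.

```python
from typing import List, Tuple

MAX_ELEVATION = 60.0  # upper elevation bound quoted in the description


def turntable_views(count: int, elevation: float = 0.0) -> List[Tuple[float, float]]:
    """Return `count` (azimuth, elevation) pairs spaced evenly around the object.

    Hypothetical helper: it only plans the angles, it does not call any model.
    """
    if count < 1:
        raise ValueError("count must be at least 1")
    # Clamp elevation into the documented 0-60 degree range.
    elevation = min(max(elevation, 0.0), MAX_ELEVATION)
    step = 360.0 / count
    # Wrap azimuth so every value stays in [0, 360).
    return [((i * step) % 360.0, elevation) for i in range(count)]


if __name__ == "__main__":
    for azimuth, elev in turntable_views(4, elevation=30.0):
        print(f"azimuth={azimuth:.0f} elevation={elev:.0f}")
```

Four views give front, side, back, and side shots; a larger `count` gives a smoother 360° spin.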

Ideogram V2

Industry-leading text-to-image model by Ideogram AI with exceptional typography rendering and text accuracy capabilities. Stands out as the #1 choice for generating images with embedded text, logos, and typographic designs. Design style significantly boosts text rendering precision for creating premium graphic designs with long, stylized text - perfect for greeting cards, print-on-demand products, t-shirt designs, posters, promotional materials, and marketing content. Outperforms competitors including Flux Pro and DALL·E 3 in image-text alignment, overall subjective preference, and text rendering accuracy based on human evaluations. Offers multiple specialized styles: Realistic (photorealistic images with lifelike textures and human features), Design (optimized for graphic design and typography), 3D (three-dimensional rendering), and Anime (cartoon/animation style). Features precise color palette control for brand consistency and artistic control. Supports flexible aspect ratios including 10:16, 16:10, 9:16, 16:9, 4:3, 3:4, 1:1, 1:3, 3:1, 3:2, 2:3. MagicPrompt functionality automatically expands and optimizes prompts for better results. Ideal for logo design, poster creation, product packaging, merchandise design (mugs, apparel), flyers, menus, social media graphics, book covers, invitations, brand identity materials, advertising campaigns, and any professional graphic design project requiring clear, legible, perfectly positioned text within images. Perfect for graphic designers, print-on-demand businesses, marketing teams, and content creators needing typography-heavy visual content.
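When a target canvas does not match one of the listed aspect ratios exactly, one option is to pick the nearest supported ratio before generating. The sketch below does this by comparing ratios on a log scale. The helper name `closest_aspect_ratio` is an assumption for illustration; only the list of ratios is taken from the description above.

```python
import math

# Aspect ratios listed in the description above.
SUPPORTED_RATIOS = [
    "10:16", "16:10", "9:16", "16:9", "4:3", "3:4",
    "1:1", "1:3", "3:1", "3:2", "2:3",
]


def closest_aspect_ratio(width: int, height: int) -> str:
    """Return the supported ratio label nearest to width:height.

    Distances are measured on a log scale so that 2:1 and 1:2 are
    treated as equally far from 1:1.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    target = math.log(width / height)

    def distance(label: str) -> float:
        w, h = (int(part) for part in label.split(":"))
        return abs(math.log(w / h) - target)

    return min(SUPPORTED_RATIOS, key=distance)


if __name__ == "__main__":
    print(closest_aspect_ratio(1920, 1080))  # a 16:9 canvas
    print(closest_aspect_ratio(1080, 1350))  # a 4:5 portrait canvas
```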

Seedance v1 Pro Fast

Advanced AI image-to-video generation model by ByteDance specialized in multi-shot narrative storytelling with exceptional motion stability and stylistic versatility. Excels at creating cohesive animated sequences with multiple scenes while maintaining character consistency, visual style, and atmospheric coherence across shot transitions and temporal-spatial shifts. Outstanding at smooth, stable motion generation with wide dynamic range - from subtle micro-expressions and gentle movements to large-scale dynamic actions - all maintaining physical realism and compositional integrity. Features native multi-shot storytelling capability generating narrative videos with seamless transitions between different scenes, camera angles, and time periods while preserving subject identity and thematic unity. Supports diverse stylistic expressions including photorealism, cyberpunk aesthetics, illustration styles, felt-texture stop-motion, cel-shaded anime, and cinematic looks through accurate interpretation of style prompts. Advanced prompt adherence for complex action sequences, multi-character interactions, and detailed camera movements. Ideal for animated short films, narrative content, storytelling videos, character-driven sequences, multi-scene animations, cinematic shorts, social media storytelling, music videos, TikTok narratives, Spotify Canvas, creative video projects, marketing stories, brand narratives, and stylized visual content. Perfect for filmmakers, animators, content creators, music video producers, social media creators, marketers, brand storytellers, and creative professionals requiring cinematic multi-shot video generation with consistent characters, seamless transitions, and diverse artistic styles for narrative-driven content creation.

Flux-2 Pro

Professional-grade text-to-image model from Black Forest Labs optimized for high-quality image manipulation, style transfer, and sequential editing workflows. FLUX.2 [pro] delivers exceptional detail in complex scenes with hyper-detailed textures, chiaroscuro lighting, and cinematic composition. Ideal for commercial production, fantasy art, detailed character renders, and professional creative work requiring maximum quality. Features adjustable safety tolerance for creative flexibility. Best for: hyper-detailed artwork, cinematic scenes, fantasy illustrations, medieval/historical imagery, armor and weapon detail, dramatic lighting compositions, commercial-grade visuals, concept art, and professional production work. Quality tier: Professional (highest detail and coherence).

Luma Dream Machine Ray-2-flash

Ray2 Flash is a fast video generative model capable of creating realistic visuals with natural, coherent motion.

Luma Photon

Next-generation text-to-image model by Luma Labs built on Universal Transformer architecture, designed to eliminate the 'AI look' with purpose-built creative aesthetics. Excels at superior prompt adherence and natural language understanding - accurately interprets complex descriptions and creative intent. Generates ultra-detailed imagery with exceptional textures, artifact-free composition, and photorealistic precision across diverse styles from cinematic visuals to artistic designs. Outperforms competing models in blind evaluations for creative quality and visual authenticity. Supports flexible aspect ratios (16:9, 9:16, 1:1, 4:3, 3:4, 21:9, 9:21). Ideal for film production, advertising, fashion photography, concept art, product visualization, and professional creative projects requiring sophisticated visual output without typical AI generation artifacts.

Recently Added

Bytedance Seedream v5 Lite Edit

ByteDance Seedream 5.0 Lite Edit is a fast and efficient image-to-image editing model designed for intelligent, prompt-based image transformation using one or multiple input images. It enables users to perform complex visual edits, compositing, object replacement, branding integration, and scene modifications through natural language instructions. This model supports multi-image input (up to 10 images), allowing advanced workflows such as combining elements from different images, transferring logos or textures, replacing objects, and refining compositions. It is optimized for speed, scalability, and cost-efficiency, making it ideal for high-volume editing tasks and production pipelines. Seedream 5.0 Lite Edit excels in practical editing use cases such as product design modifications, marketing creatives, content refinement, and visual experimentation. It can also generate new elements (like text or design enhancements) as part of the editing process, making it highly versatile. This model is best suited for users who want fast, reliable, and flexible image editing at scale, rather than ultra-premium or highly specialized editing.

Bytedance Seedream v5 Lite

ByteDance Seedream 5.0 Lite is a fast and efficient text-to-image generation model designed to produce high-quality, detailed images from natural language prompts. It is optimized for speed, scalability, and cost-efficiency, making it ideal for workflows that require quick image generation without sacrificing visual clarity. This model excels at understanding complex prompts, enabling users to generate photorealistic images, creative compositions, stylized visuals, and detailed scenes across a wide range of use cases. It supports flexible image sizing (up to ~2K resolution), multi-image generation, and safety filtering, making it suitable for both creative and production environments. Seedream 5.0 Lite is best suited for high-volume image generation, rapid prototyping, social media content creation, and general-purpose AI image workflows. It provides a strong balance between performance, quality, and affordability, making it a reliable choice for developers, creators, and AI platforms. This model should be selected when users want to generate images quickly from text prompts at scale, rather than focusing on ultra-premium or highly stylized outputs.

Image Tools

Post Processing Chromatic Aberration

Create chromatic aberration by shifting red, green, and blue channels horizontally or vertically with customizable shift amounts.
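The effect described above can be sketched in a few lines of pure Python: split the image into red, green, and blue planes, shift each plane by its own offset, and recombine. This is a minimal illustration under assumed conventions (images as nested lists of `(r, g, b)` tuples, border pixels repeated at the edges); the tool's actual parameter names and edge handling are not documented here.

```python
from typing import Dict, List, Tuple

Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]


def shift_plane(plane: List[List[int]], dx: int, dy: int) -> List[List[int]]:
    """Shift one channel by (dx, dy) pixels, repeating border values."""
    height, width = len(plane), len(plane[0])
    return [
        [
            plane[min(max(y - dy, 0), height - 1)][min(max(x - dx, 0), width - 1)]
            for x in range(width)
        ]
        for y in range(height)
    ]


def chromatic_aberration(image: Image, shifts: Dict[str, Tuple[int, int]]) -> Image:
    """Apply per-channel shifts; `shifts` maps 'r', 'g', 'b' to (dx, dy)."""
    planes = []
    for index, name in enumerate("rgb"):
        plane = [[pixel[index] for pixel in row] for row in image]
        dx, dy = shifts.get(name, (0, 0))  # unspecified channels stay in place
        planes.append(shift_plane(plane, dx, dy))
    red, green, blue = planes
    return [
        [(red[y][x], green[y][x], blue[y][x]) for x in range(len(image[0]))]
        for y in range(len(image))
    ]


if __name__ == "__main__":
    row = [[(10, 20, 30), (40, 50, 60), (70, 80, 90)]]
    # Push red one pixel right and blue one pixel left; green is untouched.
    print(chromatic_aberration(row, {"r": (1, 0), "b": (-1, 0)}))
```

Shifting red and blue in opposite directions while leaving green fixed is a common way to get the familiar colored fringes at high-contrast edges.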

Qwen Image Edit Plus Lora Gallery Lighting Restoration

Professional lighting restoration tool that removes harsh shadows, light spots, and uneven illumination from photos, replacing them with soft, natural-looking light. Perfect for fixing poorly lit images, correcting harsh studio lighting, improving portrait photography, enhancing product shots, and restoring real estate photos. Uses Qwen-based image-to-image transformation to intelligently analyze and rebalance lighting while preserving image details. Handles overexposed highlights, underexposed shadows, and mixed lighting conditions. Fast processing (6-15s) with high-quality results suitable for professional photography, e-commerce, social media content, and marketing materials.

Video Tools

Veed Video-Background-Removal Green-Screen

Professional green screen video background removal powered by VEED AI - specifically designed for chromakey footage with automatic green spill suppression for clean, broadcast-quality edges. Features adjustable spill suppression strength (0-1) to fine-tune results - increase to remove stubborn green spots or decrease to preserve subject color accuracy. Supports both VP9 (single video with alpha channel) and H264 (separate RGB + alpha for maximum quality) output codecs. Ideal for professional video production, film post-production, broadcast content, YouTube creators with green screen setups, streaming overlays, virtual studio backgrounds, corporate video production, and any footage shot against green or blue chromakey backdrops. Best choice when working with dedicated green screen footage requiring professional-grade keying results.
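To give a feel for what a 0-1 spill suppression strength does, the sketch below applies a common despill heuristic: green is pulled down toward the larger of the red and blue values, scaled by the strength. This is an illustrative assumption, not VEED's actual algorithm, and the function name `suppress_green_spill` is hypothetical.

```python
from typing import Tuple

Pixel = Tuple[int, int, int]


def suppress_green_spill(pixel: Pixel, strength: float) -> Pixel:
    """Reduce excess green in one RGB pixel.

    strength=0 leaves the pixel unchanged; strength=1 caps green at
    max(red, blue). Generic heuristic, not the tool's implementation.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    red, green, blue = pixel
    # Only the green above the other two channels counts as spill.
    excess = max(green - max(red, blue), 0)
    return (red, round(green - strength * excess), blue)


if __name__ == "__main__":
    fringe = (100, 200, 120)  # greenish edge pixel
    for strength in (0.0, 0.5, 1.0):
        print(strength, suppress_green_spill(fringe, strength))
```

Under this heuristic, higher strength removes more green fringing but also desaturates genuinely green parts of the subject, which matches the trade-off described above.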

Scail

Advanced character animation model powered by SCAIL that transfers motion and poses from reference videos to static character images using 3D-consistent pose representations. Animates portraits, characters, and figures by copying movements from dance videos, action sequences, or performance footage while maintaining character identity and coherent motion. Perfect for creating dancing photos, animated avatars, character performances, social media content, marketing videos, and digital storytelling. Uses a dual-input system that requires both a reference character image and a motion reference video to generate a new animated video with the transferred poses. Supports complex movements including full-body dance, athletic actions, expressive gestures, and coordinated motion sequences. Ideal for content creators, animators, social media influencers, marketers, and digital artists. Creates viral dancing photo effects, animated profile pictures, character demonstrations, and personalized video content. Maintains 3D spatial consistency and natural motion flow throughout the animation. Generates 512p video (896x512 landscape or 512x896 portrait) suitable for social media, presentations, and creative projects.

Audio Tools

Minimax Music 2.6

Minimax Music 2.6 is an advanced text-to-music generation model that creates complete, high-quality songs from a text prompt and optional lyrics. It can generate full tracks including vocals, background instruments, arrangement, rhythm, and production elements, making it ideal for end-to-end music creation workflows. The model allows users to describe the genre, mood, tempo, instrumentation, vocal style, and overall vibe, and optionally provide structured lyrics with sections like verses, chorus, and bridge. Based on this input, it produces a fully arranged audio track with coherent musical structure and expressive performance. Minimax Music 2.6 supports both vocal music generation (with singing) and instrumental-only tracks, making it suitable for a wide range of creative needs—from songwriting and music production to content creation and background scoring. It is best suited for creators, musicians, marketers, and AI pipelines that need ready-to-use music tracks, including songs, jingles, background music, and soundtrack-style compositions without manual production.

Minimax Preview Speech-2.5-hd

MiniMax Speech 2.5 HD is a high-definition text-to-speech model optimized for long-form content, with up to 5000 characters per request. Supports 40+ languages including Persian, Filipino, Tamil, Chinese, Japanese, Korean, Arabic, Hindi, and European languages, with a native pronunciation boost. Delivers studio-quality audio with full speed, volume, and pitch controls plus a pronunciation dictionary for specialized terminology. Features English normalization for consistent pronunciation. Perfect for long articles, documents, ebooks, blog posts, and extended narration requiring HD audio quality. Best choice when the user needs to convert large text blocks or long-form content to premium speech. Medium generation time (10-30s).
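Text longer than the 5000-character request limit has to be split before it is sent. The sketch below shows one possible approach: break on sentence boundaries and pack sentences into chunks that stay within the limit. The helper name `split_for_tts` is hypothetical, and it assumes the limit counts characters the way Python's `len` does; the service may count differently.

```python
import re
from typing import List

MAX_CHARS = 5000  # per-request limit quoted in the description


def split_for_tts(text: str, max_chars: int = MAX_CHARS) -> List[str]:
    """Split text into chunks of at most `max_chars`, preferring sentence ends."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: List[str] = []
    current = ""
    for sentence in sentences:
        # A single sentence over the limit is cut at the limit as a fallback.
        while len(sentence) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(sentence[:max_chars])
            sentence = sentence[max_chars:]
        candidate = f"{current} {sentence}".strip() if current else sentence
        if len(candidate) <= max_chars:
            current = candidate
        else:
            chunks.append(current)
            current = sentence
    if current:
        chunks.append(current)
    return chunks


if __name__ == "__main__":
    article = "First sentence. " * 1000  # about 16,000 characters
    parts = split_for_tts(article)
    print(len(parts), [len(part) for part in parts])
```

Each chunk can then be submitted as its own request and the resulting audio segments concatenated in order.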